The initial novelty of generative AI has largely worn off for professional marketing teams and content creators. We are moving past the "lottery" phase—where a user enters a prompt and hopes for a usable result—into an era defined by control, repeatability, and structural consistency. When a brand launches a campaign, it doesn't need one striking image; it needs a dozen assets that share the same lighting, color palette, and character likeness. This is where the standard text-to-image workflow often breaks down, and where a more integrated approach, centered on tools like Nano Banana Pro, becomes necessary.

Achieving this level of creative discipline requires moving away from the isolated prompt box and toward a workflow built on localized, targeted edits. In the current landscape, the ability to iterate on a single concept without losing the core aesthetic is the most valuable skill an AI operator can possess.

The Problem with the Single-Prompt Workflow

Most generic AI tools are designed for the "wow" factor. They produce high-fidelity images that look incredible in a vacuum but are notoriously difficult to replicate in a sequence. If you are building a storyboard, a social media carousel, or a multi-channel ad campaign, "close enough" isn't good enough. A character's jacket changing color between frames or a background shifting from Mediterranean to Scandinavian mid-campaign breaks the immersion and signals a lack of brand polish.

The challenge is that most generators are non-deterministic by default. Unless the seed is fixed, each run starts from freshly sampled random noise, so even an identical prompt produces a different result every time. To counter this, professional teams are shifting their focus toward specific models like Nano Banana Pro and integrated canvases that allow for more granular control over the latent space.

Leveraging Nano Banana Pro for Rapid Iteration

When we talk about Nano Banana Pro within the broader Banana AI ecosystem, the focus is on a balance between speed and architectural fidelity. In a production environment, you rarely have the luxury of waiting minutes for a single upscale. You need to see variations quickly, discard the failures, and lean into the successes.

Nano Banana Pro is optimized for this high-frequency environment. It allows creators to test compositions and lighting setups with lower latency. However, speed shouldn't be mistaken for a lack of depth. The real power comes when these generations are moved into a structured environment where they can be dissected and modified rather than just regenerated from scratch.

It is important to reset expectations here: no model, including Nano Banana, is a "set it and forget it" solution for brand identity. While the model is highly capable of following complex stylistic instructions, it still requires a human operator to anchor the visual direction through reference images and iterative prompting.

Refining Assets with an Integrated AI Image Editor

The most significant bottleneck in AI content creation is the "all or nothing" nature of generation. If an image is 90% perfect but the subject's hand is distorted or a background object is distracting, a standard generator forces you to start over. This is an inefficient use of time and credits.

Professional workflows solve this by utilizing an AI Image Editor that supports inpainting and outpainting. Instead of tossing the entire image, an operator can mask out the problematic area and prompt the AI to fill only that specific region. This maintains the integrity of the rest of the composition—the lighting remains the same, the color grading is untouched, and the character's features stay consistent.
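To make the masking idea concrete, here is a minimal sketch of the compositing step, written in plain Python on a tiny grayscale grid. It is a conceptual illustration only: the function name `composite_inpaint` and the list-of-lists image format are assumptions made for this example, and a real editor regenerates the masked region inside the model rather than blending finished pixels like this.

```python
from typing import List

Image = List[List[float]]  # grayscale pixels in [0, 1], stored row by row


def composite_inpaint(original: Image, generated: Image, mask: Image) -> Image:
    """Blend generated pixels into the original only where the mask is set.

    A mask value of 1.0 means "use the generated pixel", 0.0 means
    "keep the original pixel"; values in between feather the seam.
    """
    if not (len(original) == len(generated) == len(mask)):
        raise ValueError("all inputs must have the same height")
    result: Image = []
    for orig_row, gen_row, mask_row in zip(original, generated, mask):
        if not (len(orig_row) == len(gen_row) == len(mask_row)):
            raise ValueError("all inputs must have the same width")
        result.append([
            m * g + (1.0 - m) * o
            for o, g, m in zip(orig_row, gen_row, mask_row)
        ])
    return result


# Hypothetical 2x2 example: only the top-right pixel is masked for repair.
original = [[0.2, 0.2], [0.2, 0.2]]
generated = [[0.9, 0.9], [0.9, 0.9]]
mask = [[0.0, 1.0], [0.0, 0.0]]
patched = composite_inpaint(original, generated, mask)
```

Every pixel outside the mask comes through unchanged, which is the property that keeps lighting and color grading intact across the rest of the frame.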

Working within a canvas-based workflow also allows for "Image-to-Image" transformations that are more subtle than a full generation. By lowering the denoising strength (the setting that controls how far the output may drift from the input image), a creator can take a rough sketch or a low-fidelity stock photo and use Nano Banana Pro to overlay a specific artistic style or texture. This move from "generating" to "editing" is what separates hobbyist output from professional-grade campaign assets.
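One way to build intuition for denoising strength is to look at how it is often mapped to the number of denoising steps that actually run. The sketch below follows a convention used in some open-source diffusion pipelines; whether the Banana AI editor works this way internally is an assumption, and `img2img_steps` is a hypothetical helper name.

```python
def img2img_steps(num_inference_steps: int, strength: float) -> int:
    """Return how many denoising steps run for a given strength.

    strength = 0.0 leaves the input image untouched (no steps run);
    strength = 1.0 runs the full schedule, which behaves like a fresh
    generation that largely ignores the input.
    """
    if num_inference_steps < 0:
        raise ValueError("num_inference_steps must be non-negative")
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0.0 and 1.0")
    return min(int(num_inference_steps * strength), num_inference_steps)


# With a 40-step schedule, a gentle style pass touches far fewer steps
# than a heavy rework of the same input image.
gentle = img2img_steps(40, 0.25)
heavy = img2img_steps(40, 0.75)
```

Lower values preserve more of the source sketch or photo, which is why subtle style overlays usually sit at the low end of the range.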

The Importance of Style Anchoring and Seed Control

To maintain consistency across a campaign, operators must move beyond descriptive language and start using structural anchors. This involves more than just a well-crafted prompt; it involves the technical management of the generation parameters.

- Seed Management: Every generation starts from random noise, and that noise is determined by a number called a seed. By "pinning" a seed, you can make minor adjustments to your prompt while keeping the underlying composition relatively stable.

- Reference Images: Using a primary asset as a style or depth reference ensures that subsequent generations in the campaign follow the same spatial logic.

- Negative Prompting: This is often underutilized but essential for consistency. By explicitly defining what should not appear—such as "high contrast," "bokeh," or specific colors—teams can keep the output within a narrow brand guideline.
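The effect of pinning a seed can be shown with nothing more than Python's standard random number generator. This is a toy stand-in for the starting noise of an image model, not the Banana AI API; `initial_noise` is a hypothetical helper written for this illustration.

```python
import random
from typing import List


def initial_noise(seed: int, size: int = 4) -> List[float]:
    """Return the pseudo-random starting noise produced by a given seed."""
    rng = random.Random(seed)  # private generator, so global state is untouched
    return [rng.gauss(0.0, 1.0) for _ in range(size)]


# Two runs with the same pinned seed start from identical noise...
pinned_a = initial_noise(seed=1234)
pinned_b = initial_noise(seed=1234)

# ...while a different seed starts from different noise.
unpinned = initial_noise(seed=5678)
```

Because the starting point is identical, a small prompt change under a pinned seed tends to nudge the image rather than reshuffle the whole composition.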

Despite these tools, AI still struggles with certain high-frequency details. For instance, rendering perfect, legible text within an image remains unreliable. While models are improving, most professional workflows still involve exporting the AI-generated background and adding typography in traditional design software. Expecting the AI to handle 100% of the graphic design is a common pitfall that often leads to an "uncanny" or "cheap" look.

From Static Images to Dynamic Video Content

A modern campaign is rarely static. The transition from a high-quality image generated in Nano Banana Pro to a short-form video asset is now a standard part of the creative pipeline. The key here is "temporal consistency"—ensuring that the motion feels like an extension of the original image rather than a chaotic transformation.

By using the same underlying models within the Banana AI suite, creators can ensure that the visual DNA of their static assets carries over into video. This is particularly useful for social media marketers who need to turn a product shot into a 5-second eye-catching clip for Instagram or TikTok. The workflow typically involves taking the final edited image from the canvas and using it as the "init image" for a video generation, which provides the AI with a clear roadmap of the textures and colors it needs to preserve.

Quality Control: The Final Human Filter

No matter how advanced the Nano Banana model becomes, the "quality control" stage remains a human-led process. In a professional setting, this involves an audit of several key factors:

- Anatomical Accuracy: Checking for the classic AI artifacts in hands, eyes, and limbs.

- Lighting Logic: Ensuring that shadows fall consistently if multiple assets are meant to exist in the same "world."

- Brand Alignment: Does the output actually feel like the brand, or has it veered too far into the generic "AI aesthetic"?

There is a limitation to be mindful of: AI tends to gravitate toward a certain "slickness" that can feel sterile. To keep assets looking grounded and "real," experienced operators often intentionally introduce slight imperfections or use specific grain overlays in the post-processing stage. The goal is to make the tool invisible.
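As a rough illustration of a grain overlay, the sketch below adds small, seeded Gaussian noise to a row of grayscale pixel values. The function name `add_grain`, the `amount` parameter, and the flat list format are all assumptions made for this example; in practice this step usually happens in an image editor or compositing tool.

```python
import random
from typing import List


def add_grain(pixels: List[float], amount: float, seed: int) -> List[float]:
    """Add Gaussian grain to pixel values in [0, 1], clamping the result.

    `amount` is the standard deviation of the noise; a fixed `seed`
    makes the grain pattern reproducible across exports.
    """
    if amount < 0.0:
        raise ValueError("amount must be non-negative")
    rng = random.Random(seed)
    return [min(1.0, max(0.0, p + rng.gauss(0.0, amount))) for p in pixels]


# Hypothetical usage: a subtle grain pass over a flat mid-gray strip.
flat = [0.5] * 8
grainy = add_grain(flat, amount=0.02, seed=7)
```

Seeding the grain means the same texture can be applied to every asset in a set, so the imperfection itself stays consistent.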

Building a Repeatable Asset Pipeline

For content teams, the goal is to build a "pipeline" rather than a series of one-offs. This means documenting the specific settings, prompts, and editing steps used to create a successful asset.

A repeatable pipeline using Nano Banana Pro might look like this:

- Phase 1: Conceptualization. Rapidly generate 50+ low-resolution thumbnails to find a composition that works.

- Phase 2: Anchoring. Select the best thumbnail and use it as a reference for a high-fidelity generation.

- Phase 3: Refinement. Bring the high-fidelity image into the editor to fix artifacts and adjust color balance.

- Phase 4: Expansion. Use the "pinned" settings to create variations (different aspect ratios, different background elements) for different platforms.

This systematic approach reduces the "creative fatigue" associated with fighting against a random generator. It turns the AI into a predictable part of the stack, much like a camera or a rendering engine.
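Documenting a successful asset can be as simple as saving its settings in a structured record. The sketch below is one possible shape for such a record, not a format used by any Banana AI tool: the `AssetRecipe` class, its field names, and the example values are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field, replace
from typing import List


@dataclass
class AssetRecipe:
    """Everything needed to reproduce or extend one approved asset."""
    name: str
    prompt: str
    negative_prompt: str
    seed: int
    reference_image: str
    edit_steps: List[str] = field(default_factory=list)

    def to_json(self) -> str:
        """Serialize the recipe so it can be stored next to the asset."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)

    @classmethod
    def from_json(cls, text: str) -> "AssetRecipe":
        """Rebuild a recipe from its stored JSON form."""
        return cls(**json.loads(text))

    def variant(self, name: str, prompt_suffix: str) -> "AssetRecipe":
        """Copy the recipe with the pinned settings kept and the prompt extended."""
        return replace(
            self,
            name=name,
            prompt=f"{self.prompt}, {prompt_suffix}",
            edit_steps=list(self.edit_steps),
        )


# Hypothetical example values for a hero image and a platform variant.
hero = AssetRecipe(
    name="hero-16x9",
    prompt="product on a marble counter, soft morning light",
    negative_prompt="high contrast, bokeh",
    seed=1234,
    reference_image="thumbnail-17.png",
    edit_steps=["inpaint: fix label", "adjust color balance"],
)
story = hero.variant("story-9x16", "vertical framing")
restored = AssetRecipe.from_json(hero.to_json())
```

Keeping the seed, negative prompt, and reference image in one record is what makes the expansion phase a matter of copying a recipe rather than rediscovering it.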

The Reality of AI in Production

It is important to acknowledge that the technology is still evolving. There are times when a prompt simply won't "take," or when the model's interpretation of a niche concept is consistently off-base. In these instances, the best operators don't keep prompting; they pivot. They might use a different base model, or they might manually composite two different generations together.

The "magic button" version of AI image generation is a marketing myth. The reality is a collaborative process between an operator's vision and the model's latent capabilities. By mastering tools like the canvas-based editor and the specific nuances of the Nano Banana variant, creators can finally stop "playing the lottery" and start producing cohesive, campaign-ready work.

Conclusion

Professionalism in the AI space isn't measured by how complex your prompts are, but by how consistent your outputs remain over time. Tools like Nano Banana Pro provide the speed necessary for modern content cycles, but the real value lies in the surrounding ecosystem of editing and control.

When you stop viewing AI as a generator and start viewing it as a malleable digital canvas, the quality of your output shifts. You move from creating "cool images" to building visual identities that can sustain a brand's narrative across multiple touchpoints. The future of creative work isn't about the AI replacing the designer; it's about the designer using the AI to execute at a scale and consistency that was previously impossible. Regardless of how powerful the underlying Banana AI models become, the vision and the final quality check will always remain firmly in the hands of the creator.

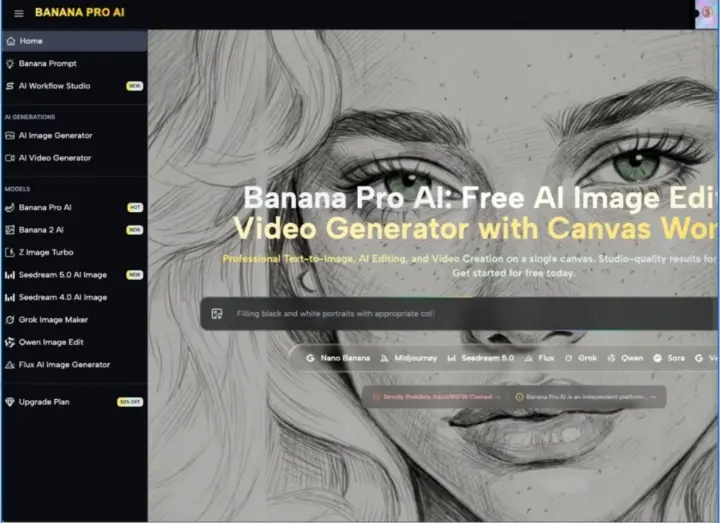

Beyond the One-Off Prompt: Achieving Campaign Consistency with Banana Pro AI