In the current era of generative media, the transition from static imagery to fluid video is often treated as a secondary process. Creators frequently pour hours into fine-tuning motion prompts, adjusting camera sliders, or iterating on seeds within video models, only to find the output marred by flickering, anatomical distortions, or muddy textures. The common instinct is to blame the video model's temporal consistency. However, a more technical audit of the workflow often reveals that the failure occurred much earlier. The quality of the "first frame"—the source asset—establishes the ceiling for everything that follows.

For indie makers and prompt-first creators, it is vital to understand that an image-to-video model essentially extends a single image through time. If the source image contains structural ambiguities or noise, the video model will attempt to "solve" those issues across dozens of frames, leading to visual artifacts. This is where an AI Photo Editor becomes more than just a retouching tool: it functions as a foundational pre-processor that defines the physical logic of the generated world before the first second of video is ever rendered.

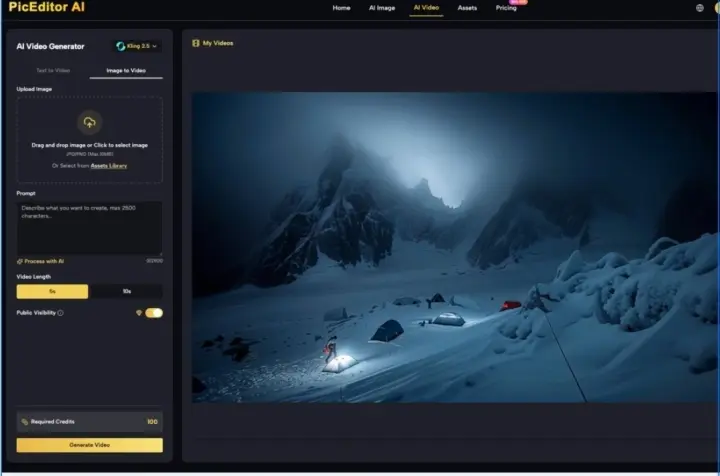

The Semantic Clarity of Source Assets

When a video model like Kling, Runway, or Veo receives an image, it has to infer from that single frame what should move and how. If you provide a cluttered image with overlapping subjects or ill-defined background elements, that inference becomes unreliable. The model might interpret a stray shadow as a solid object or a blurry background texture as a moving liquid.

Using an AI Image Editor to clean up these assets is a non-negotiable step for high-end production. By removing distracting objects, sharpening the boundaries between the subject and the environment, and ensuring the lighting is logically consistent, you provide the video engine with a clear map. A clean first frame lets the model spend its capacity on motion rather than on repairing a broken composition as it generates.

The Limitation of Pure Generation

It is a common misconception that more detail always leads to a better video. In reality, "over-baked" images—those with excessive micro-textures or hyper-sharp noise—often result in "boiling" or "shimmering" in the final video. The video generator struggles to maintain those tiny details consistently frame-over-frame. A disciplined creator uses an AI Photo Editor to smooth out unnecessary micro-noise while retaining the structural integrity of the main subject. This creates a "temporal-friendly" asset that can be animated without the visual jitter that plagues many amateur AI shorts.
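
As a rough illustration of what "micro-noise" means numerically, the sketch below scores pixel-level texture with a mean absolute Laplacian and shows that a simple blur lowers that score. It is a toy on plain Python lists, not how any particular editor works: `high_freq_energy` and `box_blur` are hypothetical helper names, images are assumed to be grayscale and at least 3x3, and the box blur is only a stand-in for the edge-aware denoising a real tool would apply to keep the subject's structure intact.

```python
from typing import List

Image = List[List[float]]  # grayscale rows, values 0..255


def high_freq_energy(img: Image) -> float:
    """Mean absolute 4-neighbour Laplacian over interior pixels."""
    h, w = len(img), len(img[0])
    total = 0.0
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            lap = (img[y - 1][x] + img[y + 1][x] + img[y][x - 1]
                   + img[y][x + 1] - 4 * img[y][x])
            total += abs(lap)
    return total / ((h - 2) * (w - 2))


def box_blur(img: Image) -> Image:
    """3x3 mean filter; border pixels average over the neighbours that exist."""
    h, w = len(img), len(img[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            vals = [img[j][i]
                    for j in range(max(0, y - 1), min(h, y + 2))
                    for i in range(max(0, x - 1), min(w, x + 2))]
            row.append(sum(vals) / len(vals))
        out.append(row)
    return out


# Checkerboard: an extreme case of pixel-level "micro-texture".
noisy = [[255.0 if (x + y) % 2 else 0.0 for x in range(8)] for y in range(8)]
smoothed = box_blur(noisy)
print(high_freq_energy(noisy), high_freq_energy(smoothed))
```

Comparing the score before and after a cleanup pass is one way to put a number on "smoothness"; which score animates best is still something to find by trial.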

Structural Refinement and Compositional Choices

Composition in a static image is about guiding the eye; composition in a source frame for video is about defining the path of potential movement. If a character is cropped too tightly at the edges, the video model has no image data beyond the frame to draw on, so moving that character tends to create "void" artifacts at the frame borders.

Using an AI Image Editor to expand the canvas (generative outpainting) before initiating the video process is a practical way to give the motion engine breathing room. This allows for camera pans and tilts that feel natural because the "world" already exists beyond the initial narrow view. Without this step, the AI is forced to hallucinate the surroundings on the fly, which often results in a noticeable drop in resolution or style shifts at the edges of the frame.
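
How much canvas to add depends on how far the camera is meant to travel. The sketch below is one way to do that arithmetic before outpainting. The function name `expanded_canvas`, the idea of expressing the pan as a fraction of the frame, and the rounding to a multiple of 8 are all assumptions made for illustration; check what dimensions your own tools accept.

```python
import math
from typing import Tuple


def expanded_canvas(width: int, height: int,
                    pan_x: float = 0.0, pan_y: float = 0.0,
                    multiple: int = 8) -> Tuple[int, int]:
    """Return a canvas size that leaves room for the planned camera travel.

    pan_x / pan_y are the fraction of the frame the camera may travel in
    either direction (a planning input, not a model parameter). The margin
    is added on both sides, then rounded up to `multiple` (assumed here
    because many image tools prefer dimensions divisible by 8).
    """
    def grow(size: int, pan: float) -> int:
        padded = size * (1 + 2 * pan)
        return math.ceil(padded / multiple) * multiple

    return grow(width, pan_x), grow(height, pan_y)


# A 1080p frame with a horizontal pan of up to a quarter of the frame width.
print(expanded_canvas(1920, 1080, pan_x=0.25))
```

The margin is symmetric on purpose: it keeps the subject centered and leaves the direction of the pan open until the video step.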

Object Erasure as a Motion Control Strategy

One of the most effective uses of an AI Photo Editor in a video workflow is the "Object Eraser" feature. Suppose you have a perfect cinematic shot of a futuristic street, but there is a modern-day trash can in the corner. If you leave it in, the video model might decide that the trash can should be a person walking or a vehicle moving, depending on the prompt. By erasing that object and letting the AI fill in the background beforehand, you lock down the environment. You are effectively "directing" the scene by removing variables that could lead to unwanted hallucinations.

The Role of Upscaling and Resolution Management

There is a significant gap between "high resolution" and "high fidelity." Many creators generate a 512x512 image and rely on the video model to upscale it. This is a mistake. Most high-performance video models perform better when fed a clean, high-resolution source (1080p or higher).

However, there is a trade-off here: a higher-resolution source also raises the risk that the model picks up "ghost" patterns. If an upscaler adds too much artificial sharpening, the video model may interpret those sharpening artifacts as physical structures. The goal when using an AI Photo Editor for upscaling should be "cleanliness" over "sharpness." You want smooth gradients and clear edges, which provide a stable base for the denoising process that occurs during video generation.
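
To make the resolution guidance concrete, here is a minimal sketch that works out how much upscaling a source needs to cover a target frame. `required_upscale` is a hypothetical helper, the 1920x1080 default simply mirrors the 1080p figure above, and returning a whole-number factor is an assumption based on upscalers that offer fixed steps such as 2x or 4x.

```python
import math


def required_upscale(src_w: int, src_h: int,
                     target_w: int = 1920, target_h: int = 1080) -> int:
    """Smallest integer factor that makes the source cover the target."""
    factor = max(target_w / src_w, target_h / src_h)
    return max(1, math.ceil(factor))


print(required_upscale(512, 512))    # 512x512 source, 1080p target
print(required_upscale(2048, 1152))  # source already covers the target
```

Picking the smallest factor that covers the target keeps the upscaler from inventing more detail than the video step needs, which fits the "cleanliness over sharpness" goal.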

Lighting and Contrast: The Silent Killers of Motion

Lighting consistency is perhaps the hardest thing for AI to maintain across time. If the first frame has high-contrast "crushed" blacks or "blown-out" highlights, the video model will struggle to determine the true texture of those areas. As the camera moves, those dark spots might flicker or morph into strange shapes because the AI doesn't have enough data to know what is "hidden" in the shadow.

An operator-led approach involves using an AI Image Editor to normalize the dynamic range of the source frame. By slightly lifting the shadows and pulling down the highlights, you provide a more "information-rich" image to the video model. Once the video is rendered, you can always re-apply a high-contrast grade in a traditional video editing suite. This "flat" source approach is similar to shooting in a log profile in traditional cinematography: it preserves the most data for the downstream process.
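
The idea of a "flat" source can be sketched in a few lines. The example below measures how much of a frame sits at pure black or pure white, then applies a linear remap that lifts the floor and lowers the ceiling. The function names, the thresholds, and the 16..235 output range are illustrative assumptions, and the input is a toy list of luminance values rather than a real image. Note that a remap like this only moves values: it cannot restore detail that was already clipped in the source, which is why the measurement step matters.

```python
from typing import List


def clipped_fraction(luma: List[int], low: int = 2, high: int = 253) -> float:
    """Share of pixels sitting at (or next to) pure black or pure white."""
    clipped = sum(1 for v in luma if v <= low or v >= high)
    return clipped / len(luma)


def flatten_range(luma: List[int], floor: int = 16, ceiling: int = 235) -> List[int]:
    """Linearly remap 0..255 into floor..ceiling (lift shadows, pull highlights)."""
    scale = (ceiling - floor) / 255
    return [round(floor + v * scale) for v in luma]


frame = [0, 0, 1, 40, 128, 200, 254, 255]  # toy 8-pixel luminance strip
print(clipped_fraction(frame))              # how much of the source is crushed or blown out
print(flatten_range(frame))                 # the same strip with a lifted floor and lowered ceiling
```

A high clipped fraction is a hint to go back and regenerate or relight the frame, since a flat grade applied afterwards has nothing to recover in those regions.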

The Unpredictability of Physics

Even with a perfect source frame, it is important to reset expectations regarding AI physics. No matter how well you prep an image in an AI Photo Editor, the motion engine may still fail to understand gravity, momentum, or the way fabric folds. A high-quality first frame ensures the texture looks good, but it cannot guarantee that a character's arm won't merge into their torso. We must accept that we are currently in a "hybrid" era where source quality minimizes errors but does not eliminate the need for multiple "rolls" or iterations.

Integrating Face Swap and Identity Consistency

For creators building narratives, maintaining a consistent face across a video is the "holy grail." This process begins with a high-fidelity reference. If the face in the first frame is even slightly distorted or lacks detail, the video model will amplify those flaws.

Using a dedicated face-swap or enhancement tool within an AI Photo Editor allows you to "pin" the identity. When the video model sees a clear, high-resolution face with distinct features, it is much more likely to maintain that identity during a turn or a smile. If the source is blurry, the model will "guess" the facial structure in every frame, leading to a character that looks like a different person from one second to the next.

Workflow Summary: The Pre-Video Checklist

To maximize the output of any generative video engine, a "pre-flight" check of the source frame is essential. This is not about aesthetics alone; it is about technical viability.

- Semantic Cleanup: Use an AI Image Editor to remove any visual noise or objects that are not essential to the scene.

- Resolution Matching: Upscale the image to at least the target output resolution of the video to prevent the model from "guessing" pixel data.

- Dynamic Range Adjustment: Ensure shadows have enough detail and highlights aren't clipping.

- Canvas Expansion: Outpaint the edges if you plan on using significant camera movement.

- Identity Verification: If a human subject is involved, ensure the facial features are sharp and anatomically correct.
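
The checklist above can be written down as a small pre-flight function, which is handy when preparing many source frames. This is a sketch under stated assumptions: `FrameReport`, `preflight`, and every threshold are hypothetical, the measurements are expected to come from whatever tools you already use, and the cleanup and face checks are treated as manual sign-offs rather than anything automated.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class FrameReport:
    width: int
    height: int
    clipped_fraction: float      # share of near-black / near-white pixels
    margin_fraction: float       # free space around the subject, 0..1
    distractions_removed: bool   # manual sign-off after cleanup
    face_checked: bool           # manual sign-off; True if no human subject


def preflight(r: FrameReport, target_w: int = 1920, target_h: int = 1080,
              max_clipped: float = 0.02, min_margin: float = 0.10) -> List[str]:
    """Return the checklist items that still need work (empty list = ready)."""
    todo = []
    if not r.distractions_removed:
        todo.append("Semantic Cleanup")
    if r.width < target_w or r.height < target_h:
        todo.append("Resolution Matching")
    if r.clipped_fraction > max_clipped:
        todo.append("Dynamic Range Adjustment")
    if r.margin_fraction < min_margin:
        todo.append("Canvas Expansion")
    if not r.face_checked:
        todo.append("Identity Verification")
    return todo


report = FrameReport(1024, 1024, clipped_fraction=0.08, margin_fraction=0.25,
                     distractions_removed=True, face_checked=True)
print(preflight(report))
```

Returning the names of the failing items, rather than a single pass or fail flag, keeps the output aligned with the checklist so it is obvious which prep step to revisit.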

Practical Judgment in a Fast-Moving Space

It is easy to get caught up in the "magic" of one-click video generation. However, the most successful AI creators are those who treat the process as a craft. They understand that the AI Photo Editor is the "darkroom" where the vision is stabilized before it is set in motion.

The current landscape of tools is fragmented, and we should be cautious about claiming that any single workflow is "perfect." What works for a cinematic landscape might not work for a high-energy product ad. There is still a high degree of trial and error involved in determining which level of image "smoothness" yields the best motion.

Ultimately, the first frame is the DNA of your video. If the DNA is flawed, the resulting "organism" will be as well. By shifting focus back to the quality, composition, and clarity of the initial asset, creators can significantly reduce their render-waste and produce video content that feels intentional rather than accidental. The path to better AI video doesn't just go through better prompts—it goes through better prep.

Rethinking First Frame Quality Through AI Photo Editor